Link Crawler is a free link checker that you can use to check the status of all the links on a website and make sure that none of them are dead. Link Crawler works on all the major operating systems (Windows, Linux and Mac) because it is a Java application, so make sure that you have the Java Runtime Environment installed before running it.

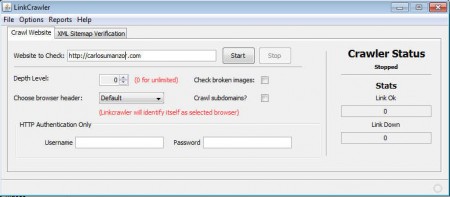

The image above shows the website crawl dashboard, where you set up the link checker. Enter the URL of the website in the Website to Check field. One thing that we noticed is that you cannot copy and paste addresses into the URL field, so we suggest that you edit the existing address to match the URL that you are interested in checking. Some of the more interesting things that you can do with Link Crawler are:

- Check to see the status of links on your web page

- Check both internal and external links

- Check images (img tags) to detect images that are down

- Check up to 1000 links per page

- Export results to an HTML or Excel document

- Validate XML sitemap for search engine submission

- Crawl sub-domains

- HTTP authentication included
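To make the internal, external and image categories from the list above more concrete, here is a minimal Python sketch of how a link checker might pull URLs out of a page and sort them. It is not Link Crawler's own code; the `LinkExtractor` class, the `classify` function and the example.com addresses are hypothetical names chosen for illustration, and a real checker would go on to request each URL and record its status.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects href targets of <a> tags and src targets of <img> tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.found = []  # list of (kind, absolute_url) tuples

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and attrs.get("href"):
            self.found.append(("link", urljoin(self.base_url, attrs["href"])))
        elif tag == "img" and attrs.get("src"):
            self.found.append(("image", urljoin(self.base_url, attrs["src"])))


def classify(html, base_url):
    """Group the URLs found in `html` into internal, external and images."""
    parser = LinkExtractor(base_url)
    parser.feed(html)
    site = urlparse(base_url).netloc
    groups = {"internal": [], "external": [], "images": []}
    for kind, url in parser.found:
        if kind == "image":
            groups["images"].append(url)
        elif urlparse(url).netloc == site:
            # Same host as the page being checked
            groups["internal"].append(url)
        else:
            groups["external"].append(url)
    return groups


# Hypothetical page content used only for this example
sample = """
<a href="/about.html">About</a>
<a href="https://other.example.org/">Partner</a>
<img src="logo.png">
"""
groups = classify(sample, "https://www.example.com/index.html")
print(groups)
```

In this sketch a link counts as internal only when its host name exactly matches the host of the page being checked, so sub-domains end up in the external group. That is a simplification made for the example.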

We haven’t mentioned this yet, but Link Crawler is a portable application, so you don’t have to install it. Just download it, extract the archive and run the run.bat file found in the application directory. You should then see the link checker dashboard, where you can set up your first website link crawl.

Similar software: Broken Link Checker.

How to check website links with Link Crawler

The first thing that you need to do, as we’ve already mentioned, is enter the URL of the web page that you’re interested in checking. After that, go over the different options that Link Crawler has:

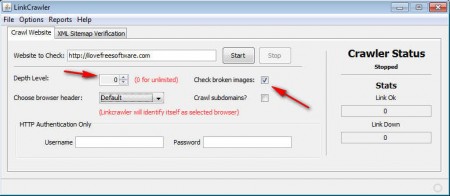

If you want to check images in addition to links, put a check mark in the Check broken images box. The most important option to set is the Depth Level, which determines whether links of links are also checked. Don’t leave this on if you have a large website, because the crawl is going to take a long time. Once everything is set up, click on Start and the scanner should do its thing.
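To see why a depth setting has such a large effect on crawl time, consider this small Python sketch of a depth-limited, breadth-first crawl. It runs over a made-up in-memory site map rather than a real website, and the `SITE` dictionary and `crawl` function are assumptions for illustration only, not a description of how Link Crawler works internally.

```python
from collections import deque

# Hypothetical site map: each page lists the pages it links to.
SITE = {
    "/": ["/a", "/b"],
    "/a": ["/a1", "/a2"],
    "/b": ["/"],
    "/a1": ["/deep"],
    "/a2": [],
    "/deep": [],
}


def crawl(start, max_depth, get_links=lambda page: SITE.get(page, [])):
    """Breadth-first crawl that stops following links beyond max_depth."""
    seen = {start}
    queue = deque([(start, 0)])
    order = []
    while queue:
        page, depth = queue.popleft()
        order.append(page)
        if depth == max_depth:
            continue  # visit this page, but do not follow its links
        for target in get_links(page):
            if target not in seen:
                seen.add(target)
                queue.append((target, depth + 1))
    return order


# Each extra level of depth pulls in more pages to check
for depth in range(4):
    print(depth, crawl("/", depth))
```

Even on this tiny example the number of visited pages grows from one to six as the depth goes from 0 to 3. On a large website every additional level can multiply the number of pages, which is why a deep crawl takes so long.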

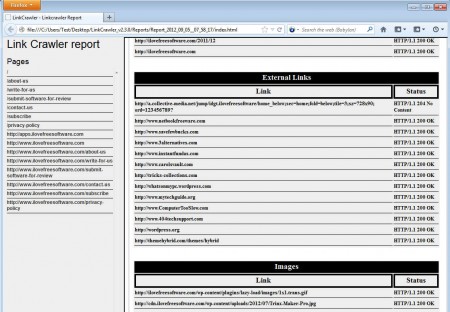

The best way to read the results is to export them to an HTML document, which you can do by clicking on Reports and then selecting Export to HTML. In the image above you can see that links are divided into internal, external and images, and of course there’s also a breakdown of all the pages that were found on the submitted URL.
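As a rough idea of what a grouped HTML report involves, the following Python sketch turns a dictionary of results into a simple HTML page with one table per group. The `render_report` function, the sample URLs and the status codes are all hypothetical, and the output is not meant to match the layout of the report that Link Crawler actually produces.

```python
from html import escape


def render_report(results):
    """Build a small HTML report from {group: [(url, status), ...]}."""
    parts = ["<html><body><h1>Link report</h1>"]
    for group, rows in results.items():
        parts.append(f"<h2>{escape(group)}</h2><table>")
        parts.append("<tr><th>URL</th><th>Status</th></tr>")
        for url, status in rows:
            # Escape URLs so characters such as & do not break the markup
            parts.append(f"<tr><td>{escape(url)}</td><td>{status}</td></tr>")
        parts.append("</table>")
    parts.append("</body></html>")
    return "\n".join(parts)


# Made-up results used only for this example
results = {
    "internal": [("https://www.example.com/about.html", 200)],
    "external": [("https://other.example.org/?a=1&b=2", 404)],
    "images": [("https://www.example.com/logo.png", 200)],
}
report = render_report(results)
print(report)
```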

Conclusion

Having dead links on a website is never a good thing, but checking each one of them individually isn’t an easy task, at least not unless you are using Link Crawler. Simply enter the URL that you want to check, select what kind of crawl you’re interested in and click Start; Link Crawler will do the rest on its own. The application is free, so try it and see how it can help you out.