Airbyte is a free, self-hosted data synchronization tool for databases and warehouses. It lets you run multiple data pipelines side by side, built from prebuilt connectors. With these connectors, you can connect databases and APIs to a destination such as BigQuery, PostgreSQL, or Snowflake. You just configure the connectors and let Airbyte do the heavy lifting. You can sync data on demand or automatically at a specified time interval. It even lets you specify which tables from the source you want to sync to the target. You can create many workflows in it, and it will keep syncing the data.

If you have data sources that you want to sync to other databases at a large scale, Airbyte is for you. You can quickly create efficient data pipelines for free and let them run on their own. However, this is fairly heavy software, so you need to run it on a machine with good specifications. If you install it on a server, make sure it is fast enough to handle multiple heavy data pipelines. Installation is easy because it uses Docker, and you can get it up and running in just a few minutes.

Free Data Synchronization Software for Databases & Warehouses: Airbyte

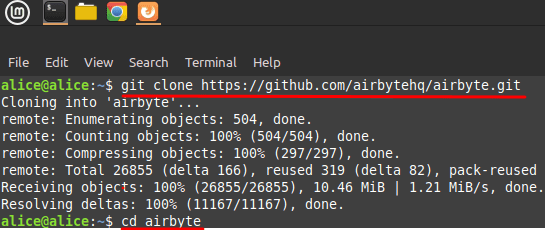

If you are a programmer or DevOps expert, you will love this tool. It has data connectors for Salesforce, HubSpot, Google Sheets, CSV, GitHub, PostgreSQL, MySQL, and many others. I recommend installing it on a Linux server that already has Docker and Docker-Compose. Next, open the terminal, clone the GitHub repository, and cd into it.

git clone https://github.com/airbytehq/airbyte.git && cd airbyte

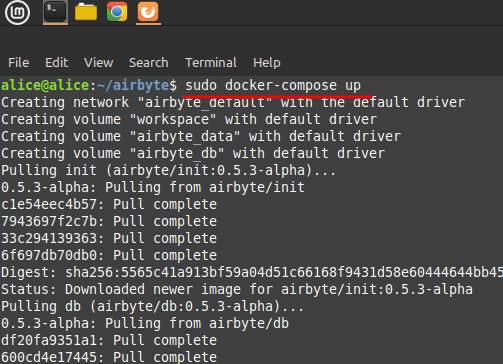

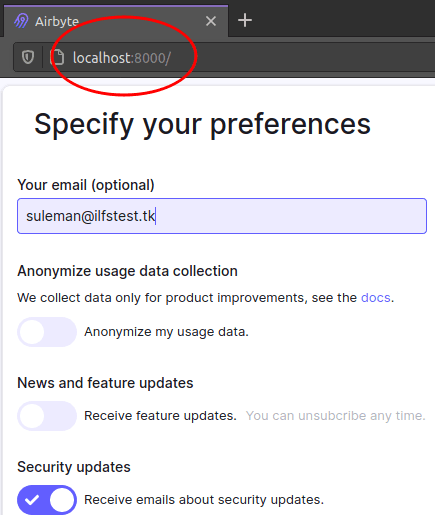
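Since this setup relies on Git, Docker, and Docker-Compose being installed, a quick check before building can save a failed run. The following is a minimal sketch, assuming a POSIX shell; `missing_tools` is a hypothetical helper name and not part of Airbyte.

```shell
#!/bin/sh
# Hypothetical helper (not part of Airbyte): print the names of any
# given tools that cannot be found on PATH, separated by spaces.
missing_tools() {
    missing=""
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            missing="$missing $tool"
        fi
    done
    # Strip the leading space before printing.
    printf '%s\n' "${missing# }"
}

# Check the tools this walkthrough relies on.
result=$(missing_tools git docker docker-compose)
if [ -n "$result" ]; then
    echo "Missing: $result"
else
    echo "All prerequisites found"
fi
```

If anything is reported as missing, install it first and then continue with the next step.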

Now run the following command, and it will start building the containers. This takes some time. Once you see the Airbyte logo in the terminal, you can open the web UI in your browser at localhost:8000, as shown in the screenshots below. Enter your email address there to get started.

docker-compose up

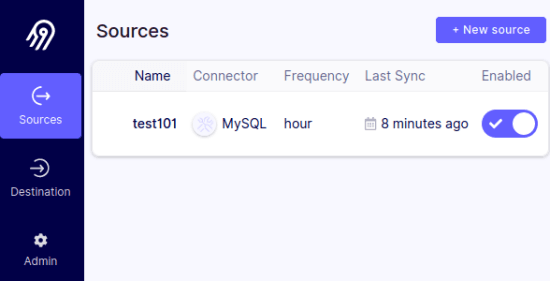

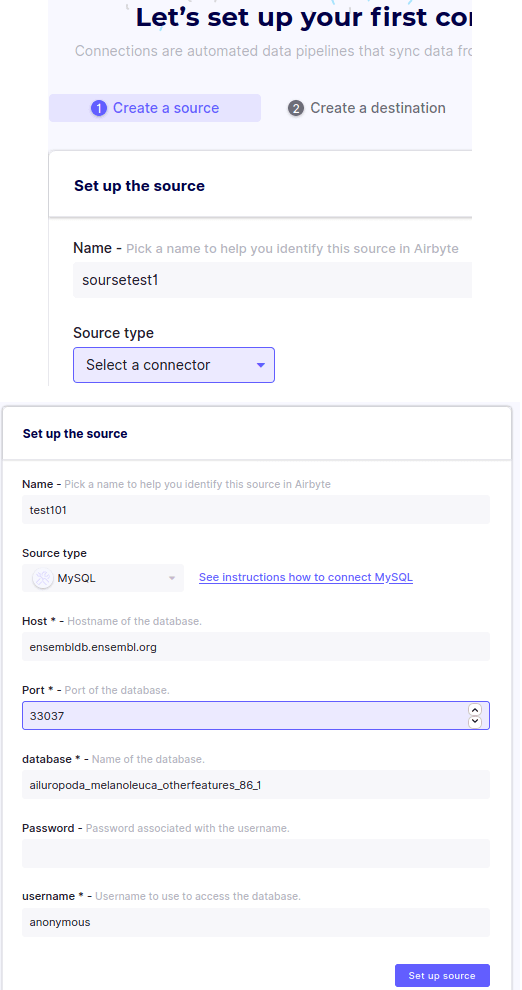
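If you would rather not watch the terminal for the logo, you could poll the web UI from a second terminal until it responds. This is only a sketch, assuming `curl` is installed and that the UI at localhost:8000 answers with a success status without authentication; `wait_for_url` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: poll a URL until it responds or attempts run out.
# Usage: wait_for_url URL [ATTEMPTS] [DELAY_SECONDS]
# Returns 0 as soon as the URL answers successfully, 1 otherwise.
wait_for_url() {
    url=$1
    attempts=${2:-60}
    delay=${3:-5}
    i=0
    while [ "$i" -lt "$attempts" ]; do
        # -s: silent, -f: treat HTTP errors as failure, -o: discard the body
        if curl -sf -o /dev/null --max-time 5 "$url"; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}

# Example (assumed address from this article):
#   wait_for_url http://localhost:8000 60 5 && echo "Airbyte UI is up"
```

The attempt count and delay are arbitrary defaults; on a slower machine the first build may need a longer wait.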

You start by creating your first data pipeline. To do that, configure the data source first. Choose a connector from the list based on where you want to fetch data from. There are plenty of source connectors to choose from, and each one has its own configuration. In my case, I am using a MySQL database. If you select a DBMS like this, specify its credentials there.

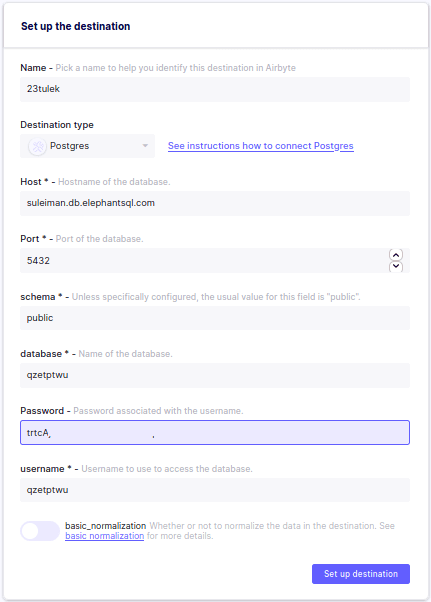

After you have configured the source, specify the destination. A destination is typically a data warehouse endpoint where Airbyte will keep syncing the data. You select it from the list of supported data warehouses, and again you have to specify the credentials. At the time of writing, it only supports 4-5 destination services, and I hope more will be added in future updates.

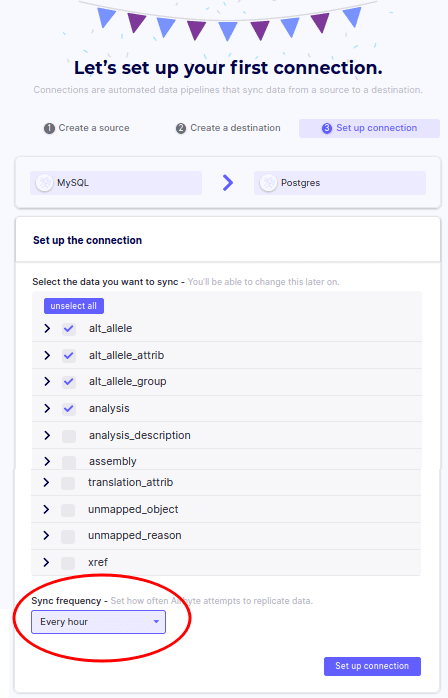

After you specify the destination, Airbyte verifies the connection and, on successful verification, lists the tables from the source. You can select all of them or just the ones you want to sync. Along with this, you specify the sync frequency. You can sync data as often as every 5 minutes.

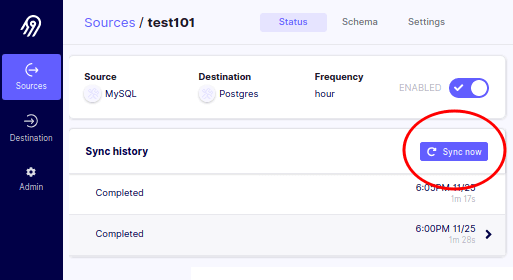

Now your connection is created, and you can see its stats on the dashboard. Similarly, you can create other connections, and they will all be listed on the main UI. If you want to sync a connection on demand, use the sync now button there. It is as simple as that.

In this way, you can use Airbyte to create efficient data pipelines to sync databases and APIs. You can create unlimited workflows here, and they will run at the specified time interval. You can change the settings of any workflow at any time, and the change will take effect accordingly. It is also an open source project and is extensible. If you think a connector is missing, you can create one if you have programming skills.

Final words:

If you are looking for a free and unlimited data synchronization tool, Airbyte is a strong option. You can either run it locally or host it on a server. It is open source as well, so you can take part in its development. I liked the prebuilt data connectors it offers and the way it works. You can manage heavy data with it easily and put data syncing on autopilot. It can work with APIs, and you can also dump data to a file such as CSV. The process to get started is covered above, so give it a try and let me know what you think.